
Besides all the horror that comes with any war, we know that the most recent Russian invasion of Ukraine made an immense impact on energy (geo)politics. Economic historian Adam Tooze used the term 'polycrisis' to describe the sort of chain effect that comes from complex situations such as these, as well as the problems connecting environmental issues, public health emergencies and geopolitics that we have seen in recent years. I am following the current energy crisis closely due to its significance to my research object, but also due to a general interest I have in what this issue means for the political debate in terms of long-term global sustainability vs. short-term energy (and state) security.

The war also affected the games industry in several ways, and the data center industry far more deeply. Two cases exemplify this. The first is the impact on the expansion of cloud gaming platforms: although some nationally based actors, namely Vodafone in the UK or Magenta in Germany, invest in this on a smaller scale, most of the investment in infrastructure comes from private transnational conglomerates (i.e. Amazon, Google, Microsoft, Sony, Nvidia). Since the sanctions on Russia started to take effect at the beginning of last year, we saw one of the most prominent actors in the sector, Google, publicly claiming to be "deprioritizing" gaming within their huge portfolio of services. In September there were also rumors that they were selling their cloud platform technology, Google Stadia, to another company, and the sale is really taking place at this moment. What interests me is that in press releases Google claimed that the sale of Stadia was driven by a lack of interest from gamers. This sounds very odd, because if one follows the games industry reports (as I did) for some of the most heated gaming markets worldwide (namely the US, Japan, Germany...), the reports say the complete opposite. They show an increasing demand for these kinds of services, and a growing acceptance of cloud platforms for gaming purposes. So I strongly suspect that the information in Google's press releases is just an excuse (good old press release, isn't it?). Given that the cloud model moves computer processing and storage from customers' homes to those who manage the infrastructure, the energy costs also fall on the cloud infrastructure provider.
I tend to believe that Google is giving up some of its most energy-consuming services, like Stadia, because it foresees an enduring conflict and growing tensions over energy trade, which would make their business model economically very risky. The second effect is on data center innovation and the experimental developments in cloud technology and transnational energy trade. There is an interesting case I am following closely (and which I did not include in my presentation simply for lack of time) concerning a data center built in Hamina, Finland. This data centre was built in 2014 by Yandex, a company widely known in the West as "the Russian Google". This is an interesting case because this Russian data center produces a lot of excess heat and, thanks to an ambitious geoengineering project, sells the waste energy (heat) to the local Finnish company Nivos Energia Oy, which provides domestic heating services. So the excess heat produced by the data center has been used to heat houses in Hamina for several years now, which eventually even led the city to declare that it was meeting its goal of reaching Net-Zero Emissions in 2030 one decade earlier than expected. This was an interesting case of cooperation that was directly affected by the war. In response to Russia's invasion of Ukraine, the Yandex data center in Finland was cut off from the local electricity grid in April and has been running on diesel since then. The Yandex data center changed its name and logo, but it is pretty difficult to assess how its contracts have been managed since then. Since June, Google (again) has bought loads of land in southeastern Finland to build its sixth data center there, in order to expand its operations in the region. So this is another sign of geopolitical "decoupling", and of how it plays out in the competition among tech conglomerates.
Indeed, if we take these to be symptoms of newly established polycrises, they might well only be understood through epistemological perspectives that are comprehensive enough and capable of grasping, across different established disciplines, the multifaceted imbroglios of the present situation.


As far as the state-of-the-art research for the Cloud Gaming Atlas goes, I have reviewed several media studies articles exploring the environmental dimensions of cloud platforms and data centres. One of the pieces that really made an impact on my current project is Shane Brennan's 'Making Data Sustainable: Backup Culture and Risk Perception', an article published in Routledge's collection Sustainable Media: Critical Approaches to Media and Environment (2016). I highlighted some parts of the article and also made some comments, as you can read below:

«A culture of backup in which computer users everywhere are expected to copy all of their important files on a regular basis to multiple platforms, including online or “cloud” remote server–based storage. Only then, supposedly, will our precious data outlast a multitude of disasters--from fires and floods to errors both human and hardware—and we may achieve the common goal of digital continuity, regardless of the energy costs involved». 57

«As personal backup practices have shifted from more localized forms of data storage, such as the external hard drive, to cloud services, a different idea of backup as a risk-mitigation strategy has emerged. The geographic redundancy of the cloud—in which several copies of a file are spread to at least one, far-off data center—is seen as a way of making information more “sustainable,” in the sense of maintaining it over the long term against various kinds of disruption. But this digital “sustainability” is achieved by multiplying the amount of data stored in energy-consuming server farms». 57

«The cultural appeal of personal cloud backup over external hard drives is symptomatic of a broadening of risk perception in what Ulrich Beck has called the “risk society,” a moment in which we become aware of and must learn to manage a range of mostly intangible, human-caused dangers. The prevailing attitude in the risk society, Beck notes, is “negative and defensive . . . one is no longer concerned with attaining something ‘good’, but rather with preventing the worst.”» 58

«Answering these questions requires bringing together two different ideas of sustainability—digital and environmental—and asking what their surprising incompatibility can tell us about how to reconcile our digital preservation habits with the vital need to preserve our collective habitat». 59

«Concentrating in places where breakdowns are seen to be especially likely, undesirable, or both, backups mediate cultural understandings of risk and vulnerability, offering a way to study how these perceptions get materialized in various infrastructural systems». 60

«As Paul Edwards describes, the Semi-Automatic Ground Environment (SAGE) air defense infrastructures built by the US military in the 1950s combined redundant electrical generators and concrete enclosures—structures that echoed the Cold War’s “political architecture of the closed world”—with backup computers for analyzing radar data. Each SAGE center, he explains, had “two identical computers operating in tandem, providing instantaneous backup should one machine fail.” In these systems, Cold War anxieties about national security and threats to human life became entangled with fears about the unreliability of computing and the vulnerability of digital information». 60-61

«Around the same time, data backup techniques responsive to Cold War anxieties were developing in the corporate sector. Iron Mountain Atomic Storage (founded in the early 1950s) harbored copies of sensitive business documents from nuclear threats inside a disused iron mine». 61

It is worth pointing out here that Iron Mountain is nowadays an important actor when it comes to providing data centre infrastructure.

«While this idea of security has roots in the Cold War era and elsewhere, it continues—in some notably direct ways—in cloud infrastructure. As Hu explains, “many highly publicized data centers” repurpose old military bunkers: “Inside formerly shuttered blast doors meant for nuclear war, inside Cold War structures . . . and inside gigantic caverns that once served as vaults for storing bars of gold, we see the familiar nineteen-inch racks appear, bearing servers and hard drives by the thousands”.» 61

«Home broadband connections allowed companies to market remote online—rebranded as “cloud”—server based data storage and backup as a desirable commodity for individual users, not just corporations. “It used to be that we kept our data on our (actual) desks,” observes Andrew Blum. Now, “the ‘hard drive’—that most tangible of descriptors—has transformed into a ‘cloud,’ the catchall term for any data or service kept out there, somewhere on the Internet.”» 62

«In addition to being a matter of cost and convenience, the growing popularity of cloud backup reflects a collective sentiment that the cloud—and more precisely the dematerialized, geographic redundancy it represents—is a safer way to store information in the contemporary world. (...) The critical difference is WHERE those drivers are located from the perspective of the user.» 63

«By dispersing data copies to more than one “bunker,” cloud backup increases the odds that at least one copy will escape these network “contagions.”» 64

«The rhetoric of World Backup Day similarly frames data loss mitigation as a near-universal responsibility, departing from the top-down logic of older state- and corporate-led backup systems. Everyone is charged with protecting his or her own data, even though access to the cloud and digital backup, like many other kinds of security and technology, is far from universally distributed.» 64

«Part of the attraction of the cloud metaphor, in addition to making digital infrastructure seem magically “green” and virtual, is the image of airborne mobility that assuages fears about data impermanence.» 65

«At the same time, cloud storage can be used to subvert legal and territorial limits. In 2012, the peer-to-peer file-sharing site, The Pirate Bay (TPB), uploaded its entire operation to several commercial cloud-hosting providers, making it more resistant to, or backed-up against, the disruption of police raids. “Moving to the cloud lets TPB move from country to country, crossing borders seamlessly without downtime,” the group explained. Both in terms of where information is stored and how it moves across transnational networks, geopolitical conditions are still important to the conceptualization and implementation of backup in the cloud era, just as they were during the Cold War.» 65-66

«Backup is also no less material today than in previous decades. As Stephen Graham attests, digital media infrastructure remains absolutely reliant “on other less glamorous infrastructure systems—most notably, huge systems for the generation and distribution of electric power.” Moreover, the geographic spread of data centers may make it more difficult to pin down their energy use.» 66

And then Brennan lays out one of the most central arguments of the article:

«Bradley defines the more recent formulation of “digital sustainability” as providing “the context for digital preservation by considering the overall life cycle, technical, and socio-technical issues associated with the creation and management of the digital item.” This approach recognizes that information can never be truly permanent but can only be sustained through an active process of building and continually maintaining costly, energy-intensive infrastructures that preserve “valuable data without significant loss or degradation.” A crucial paradox arises, however, since “digital sustainability” is essentially at odds with—and its implementation may very well undermine—environmental sustainability, which “creates and maintains the conditions under which humans and nature can exist in productive harmony.” The adoption of “sustainability” by digital preservation discourse works to obscure this conflict, making it seem as if ensuring the “life cycles” of data is akin to, and in line with, protecting biological life and planetary ecosystems. Although some data centers are powered in significant ways by renewable energy like wind and solar, this does not account for the “embodied energy” that went into building these infrastructures and the electricity used to access remote servers on the user end, much of which still comes from the burning of fossil fuels. In addition, data centers rely on industrial backup systems such as massive arrays of polluting emergency generators, which must be tested regularly. These generators are designed to mitigate the risk of disruption from a power failure and meet clients’ demands for 24–7 access. Entire data centers are also “backed up” in the form of duplicate physical and digital architectures— known as disaster recovery sites—increasing energy costs even further.» 67

Disaster recovery sites and systems are an excellent theme related to my research, which could be expanded into a new project: Resource gaming. Mel Hogan also makes an important contribution to the study of the environmental entanglements of data centres:

«Mel Hogan examines “the potential environmental costs of our everyday obsession with self-archiving” on Facebook as well as our expectation of instantaneous access. “The cost of such instantaneity,” she argues, “is that almost all the energy that goes toward preserving that ideal is, literally, wasted,” since most servers idle in standby mode— itself a form of backup—ready to meet sudden demand. Her analysis reveals Facebook to be a wasteful archive, a paradoxical system that consumes exorbitant amounts of energy in order to “sustain” information and our ability to access it.» 68

And here Brennan underlines an important statement concerning the cultural implications of the data centre ecology. More importantly, he highlights how the study of cultural tropes is essential to understanding the complex and paradoxical situation of how our society came to rely upon high energy-consuming data centres to sustain our mediatized daily routines:

«Yes, a data center may be power-hungry and inefficient, but this is in part necessitated and justified by our unsustainable “digital demands.” The field of environmental media research, in other words, must address sustainable cultures of media use alongside and in dialogue with concerns including data center design, electronic waste, and the question of how media become tools for “environmental communication.”» 68

«It is difficult to know what constitutes acceptable versus excessive redundancy, in part because there is a lack of transparency about precisely how many times our files are replicated online and where they end up.» 69

«As a way of defining organizational relationships in response to perceived risks, backup is a historically specific concept and media practice. (...) The key paradox is this: to protect our data from the threats associated with climate change—such as storms, floods, and drought-induced wildfires—we multiply and distribute our data across a vast network of server farms.» 69

«Climate change and globalized risk perception have already radically expanded what is seen as requiring backup. The Svalbard Global Seed Vault, for example, stores duplicate copies of crop seeds in a Norwegian Arctic mountainside in order to be able to “restart” agriculture after a large-scale disaster, recalling both Cold War bunkers and high-latitude data centers. The Atlantic called it “the world’s agricultural hard drive,” and, according to its director, “the seed vault is a kind of safety backup for existing seed banks and their collections.” But a group known as the Alliance to Rescue Civilization (ARC) takes this idea even further by proposing to back up the entirety of human civilization in a dedicated lunar facility. This backup would include a copy of “our cumulative scientific and cultural treasure chest” and “enough human beings (and supporting species) to repopulate Earth,” thus hedging against global disasters including climate change, nuclear war, and large asteroid impact, explain cofounders William E. Burrows and Robert Shapiro.» 70

«It urges us to reject the fantasy of a fully backup-able world, which can lull us into a false sense of security, promoting inaction. This imperative is represented in a graphic produced by the Climate Reality Project and shared on social media: superimposed on the “Blue Marble” Apollo 17 photograph—an icon of environmentalism made possible by the many backup systems of the Space Race—are the words “THERE IS NO EMERGENCY BACKUP PLANET”.» 72

«Just as the image of the cloud obfuscates the large-scale energy and carbon processes that, by fueling climate change, make the world gradually less safe for our information and for us, even the remote possibility of an emergency backup planet distracts from the irreversible mass extinction and ecological devastation already well underway. It deceives us into thinking there is a separation between Earth’s natural “hardware” and the cultural and biological “data” it supports, that one can be saved without the other.» 72

The first week of November saw Fellows at the Zukunftskolleg hold a Scientific Retreat at the Zentrum für Kunst und Medien (ZKM) in Karlsruhe. The ZKM is a cultural institution that gathers scientists and artists from all over the world to collaborate on practical-theoretical projects. The institution also has some of the largest collections of media arts in Europe, with a particular focus on computer and video art. The day trip to the museum was preceded by a lecture on museums of computing, given the previous day by Peter Krapp (University of California, Irvine), who is also a Senior Fellow at the Zukunftskolleg. The journey started early in the morning, with Fellows arriving in Karlsruhe by train around 13h00. After a pleasant time to talk and get to know colleagues better during the train journey and over lunch at the bistro in the Foyer of the ZKM Café, the researchers had guided tours through three of the main open exhibitions at the museum.

First was the exhibition “Walter Giers. Electronic Art”, which spanned the career of the German op-art pioneer, from his early works deconstructing radio equipment, through his experiments with computer sensors and body movements, to his idiosyncratic pieces of jewelry design. The interactive aspect of Giers' artwork made it a particularly good start for a tour, as Fellows were invited to experiment with some of the pieces. Even though some of the installations could not be restored to their original state for the exhibition, the noisy technical experiments of most pieces made themselves heard during the nearly one-hour-long guided walk through the exhibition. Next was “Lazy Clouds”, the current exhibition of artist Soun-Gui Kim. In a completely different rhythm, the tour through the three main halls, which spanned different phases of the artist's career, had a much more introverted feel, reflecting Kim's conceptual approach to the poetic aspects of landscape and her attention to human experiences within natural and social environments. Fellows could follow the evolution of her visual work from the early video interventions in (and struggles with) traditional Korean art to her more recent works exploring the structural tensions and continuities between digital technology and traditional media practices. Between the guided tours, the participants had the opportunity to observe some of the ateliers and workshops where artists and researchers develop their collaborative work at the ZKM. In the last tour, Fellows had the opportunity to observe how the zkm_Gameplay exhibition was curated. Concerned with the past of digital games, this exhibition presented a diverse selection of historical and creative works from the medium of video games.
In keeping with the tradition of the ZKM as a media-oriented museum, the curatorial approach of zkm_Gameplay focused on the artistic and technical limits and possibilities of the medium through specific, canonical titles from the history of digital games up to the present. Participants could also play some of these titles, mostly by making use of emulators, but also on some original consoles provided by the museum. Before returning, Fellows also took a walk together through the city center of Karlsruhe, visiting the park of the Badisches Landesmuseum Karlsruhe before traveling back to Konstanz at night.

On 28.10, Kathleen Booth passed away. She was a mathematician and a computer scientist who wrote the very first assembly language for computer systems (for the ARC computer).

She started collaborating with a small team of researchers, which included Andrew Booth, who would later develop one of the first rotating storage devices, preceding computer disks. What is interesting to notice, in my view, is that part of their groundbreaking work was developed during two 6-month research visits to the Institute for Advanced Study (IAS) in Princeton, where they had the chance to work with John von Neumann on his machine architecture, which enabled programs to be stored through a memory function. This experience led Andrew to redesign the ARC, repurposing the relay part of the machine (developing what is sometimes referred to as the ARC2). In 1947, while still at the IAS, Kathleen and Andrew wrote two reports about these experiences, 'General considerations in the design of an all-purpose electronic digital computer' and 'Coding for ARC'. The first report outlines several different options for the memory function of the von Neumann architecture machine, describing how to develop it. The second report explains how the instructions are represented in machine language and can be loaded into a storage device. Kathleen would later also turn to research in natural language processing and neural networks.

In collaboration with Sonia Fizek (Cologne GameLab), Camila de Ávila (Unisinos), and Emmanoel Ferreira (Federal Fluminense University), I am organising a Special Issue for the Convergências Journal on the history of ecogames and game-like simulations of climate behaviour. Please spread the word around. The call goes like this:

It is very easy to find in discussions of computer games and ecology, especially in times when green games seem to consolidate as a genre within computer game typology, the assumption that gaming can help (if not directly “save”) the environment, by making use of climate change communication, or even by prompting the direct climate action of individuals through gamification strategies. We should not disregard such a drive for change, especially if one considers how urgent and porous the challenges regarding the current environmental crisis are. If biodiversity and ecosystems as we know them are at stake as we venture through the Anthropocene (Dirzo et al. 2014), the voluntary efforts from a diverse set of scientific disciplines and sectors of contemporary society in mitigating the effects of anthropogenic climate change are expected and desirable. It is also not strange that today such impulses for active intervention embody the prospects of gamification, that is, the process of permeation of our society with methods, metaphors, values, and attributes of games (Fuchs 2011), more often than not surrounded by streams of enthusiasm and sheer optimism. Yet, as fresh as they might seem under a presentist perspective, the motivations and utopias to ‘save the planet’ through gaming are not at all new. Methods such as media archaeology (Ferreira 2020, Parikka & Huhtamo 2011) provide us with an opportunity, among other things, to rescue forgotten artefacts which have been erased by the canonical history written over a determinate subject or which were simply forgotten by the constant drive of contemporary societies for novelty. This of course strikes a highly sensitive nerve in the case of computer games, as in discourses surrounding digital media and the information technology industry more broadly, considering the economic and political prominence that technological innovations have in these sectors. 
Therefore it is no surprise that the past of technical media as such has to be constantly retrieved through careful historical or media-archaeological examination (Reinhardt 2018, Guins 2014, Fischer 2013, Krapp 2011). In this sense, the history of ecological games should not work that differently from the cyclical history of ecological thought, which has been renewed over past decades, and -- not by accident -- re-enacted with much more intensity in recent years throughout different disciplines (Veiga 2019). With this call, we do not wish to merely point with a nostalgic verve to preceding educational ecological games, nor to simply point towards a historical recurrence. Instead, we seek to highlight more specifically how discussions concerning ecogames from the past (as well as their potential and promises for change) are missing from current perspectives on green gaming. By retrieving them, it should also be possible to better evaluate what are the assumptions, as well as the promises, successes and limitations in motivating players to engage with ecological perspectives and environmental action through games. Moreover, this exercise should probe what else can be learned by digging up the forgotten artefacts and histories of educational ecological games and gaming materials oriented toward climate action.

For this Special Issue of the Convergências Journal, we are particularly interested in proposals dedicated to discussing the following topics:

* Histories of the development of ecological games.
* Intersections between the history of games and ecological thinking.
* Intersections between the history of ecology and playfulness.
* Media archaeological accounts of forgotten ecological games.
* Archaeogaming approaches to ecological games from the recent and long past.
* History on the role of play in climate change communication.
* History of games in campaigning for climate action.
* History of in-game ecocriticism.
* Deep-times of gaming and natural histories of digital games.
* History of resource management associated with game production, distribution and consumption.
* History of the relationship of the games industry with regulations and policies toward sustainability.
* Material dimensions of game technology as technofossils of the Anthropocene.
* Sociotechnical approaches to games as a form of ecological knowledge and of knowing.

Deadlines:

* Article submissions: 30.11.2022
* Confirmation of acceptance/rejection: 15.01.2023
* Publication: Early 2023

You can find more details of the CFP, as well as the author guidelines for submitting a paper, on the website of the journal: https://revistas.unasp.edu.br/converg.../announcement/view/6

From October to November we will have UCI Professor Peter Krapp as a Senior Fellow at the Zukunftskolleg. During this time, we will work on several mutual collaborations regarding my current project on cloud gaming infrastructures in particular (and networked computing in general). I am currently reviewing a contribution by Peter to the recent CfP I am organising for a Special Issue of Convergências Journal on the history of so-called 'ecogames', and we are also in conversation about a co-written piece on the intersections between the history of climate simulations and in-game weather modelling.

Besides articles, Peter will contribute to the Zukunftskolleg and the department of Literature, Art, and Media Studies of the university by giving a lecture about his current project on museums of computing and internet history. The lecture, which takes place on November 3, should provide researchers with an opportunity to observe and weave together theoretical and curatorial approaches to media history, with a particular focus on the properties of networked computing as an archival medium. In commonplace imagery, computing is constantly leaning towards the future, so it is unusual for inquiries into the past of computers to rise to the centre of public perception. Nevertheless, in recent years the history of digital media, software systems, and computer architecture has increasingly become an object of interest to several museums, with a growing number of institutions aiming to archive and memorialize the past of computing and especially of the internet. Indeed, with computers and digital telecommunication infrastructures continuously permeating more dimensions of social life since the late twentieth century, one could also claim that developing museums of computing, as much as exhibitions of particular digital media by-products, was an inevitable, impending outcome. Whether museums offer the most appropriate possibilities to grasp and present such histories is a different question. Just like institutions and other spaces developed with the purpose of memorializing the past of human civilization, the internet springs from a plethora of material marks and software traces which are left for narrativization, and it also bears a great proliferation of in-situ and virtual museums that account for the early days of networked computation. As far as curatorial approaches go, one can find a proliferation of nodes and indexes which tell their own curated versions of their past.
Therefore, the places of memory on the internet, as venues to represent the past, end up facing challenges analogous to those of cultural institutions with regard to the selection of their objects of interest, also performing acts of remembrance, celebration, reinforcement, forgetting and erasure. Through its truly interdisciplinary body of collaborators, which currently also spans researchers with backgrounds in history, memory studies, and computer science, the Zukunftskolleg will surely also provide an interesting platform for gathering different perspectives on the history of networked computing for Peter's ongoing project on the theme.

A lot of work will be going on in the background next month. While organising lectures and workshops for the Fall term, the project also provided me with the opportunity to give two talks in October. It has been... well, more than two years since the last time I presented a paper at an in-person event, still in Brazil, so there are of course a lot of expectations and anxieties about it. As for the work per se, the two talks will directly address the Cloud Gaming Atlas project, although from quite different angles.

On 08.10, at Bischofsvilla, I will give a talk regarding the regulation of temperature in data centre facilities. As part of the Postdoctoral Colloquium of the Literature, Art, and Media Studies Department, this talk will approach cooling and fire prevention systems as important infrastructure involved in a broader "techno-thermal" orientation to media in the age of streaming platforms. On 13.10 I will travel to Estonia to present a short paper at the workshop Video games and environmental issues: Current and future challenges, which is part of the next Central and Eastern European Game Studies conference. The paper seeks to bring the current discussions on 'green games' and 'green gaming' (which are significantly driven by the idea of engaging with climate communication through games, but also by attempts to establish climate councils and approve climate policy regulations within the games industry) together with perspectives from media infrastructure studies. The main argument is that the current focus of the discussions on CO2 emissions produced by game developers and publishers, while important for assessing the share of studios and other industry actors in the climate crisis, is less than effective for assessing the problem of rising energy consumption, which is predicted to escalate with the massive assemblage of geo-distributed data centres for cloud gaming provided by IT infrastructure companies. More updates about the conferences soon...

Screenshot from Szymon Adamus, Dec 1, 2015. Input lag - what is it and why is it so important (YouTube video).

Here are some thoughts and entries written down while doing the annotated citations of the article 'When infrastructure becomes failure: a material analysis of the limitations of cloud gaming services', by Sean Willett:

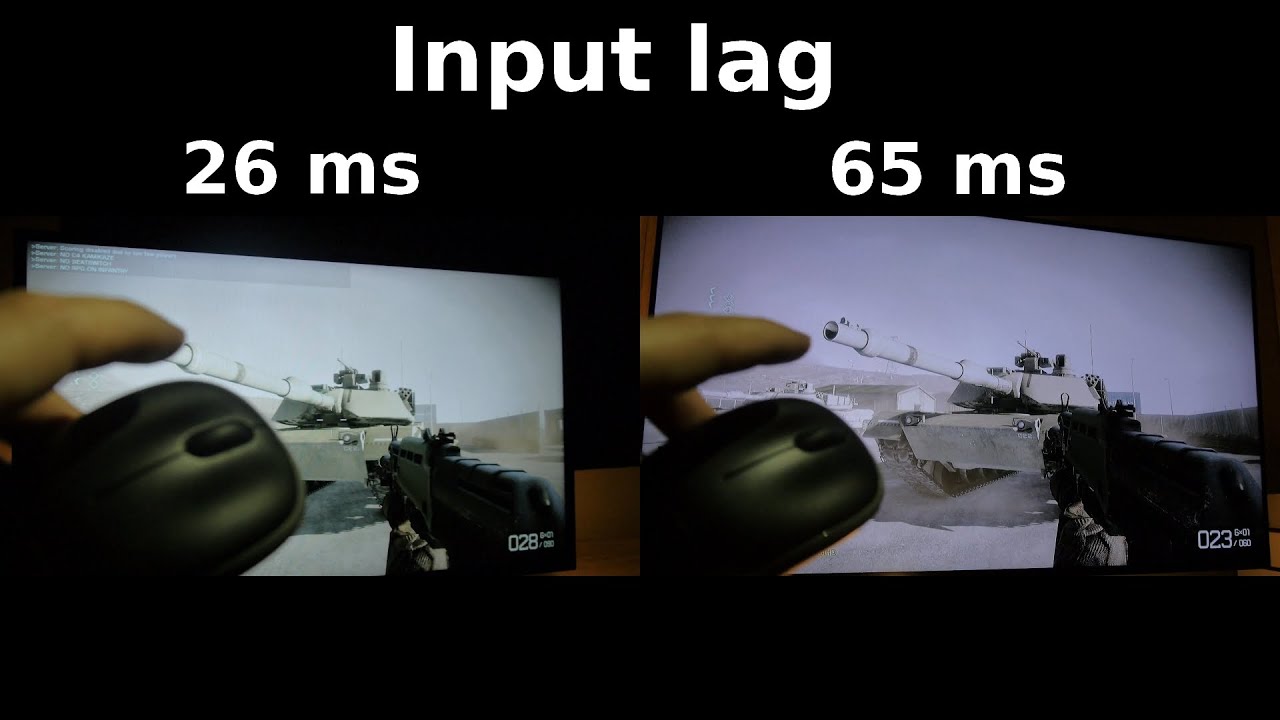

Cloud gaming, again "No longer do people need thousands of dollars and a familiarity with computer hardware to enjoy high-end PC gaming. Instead, they simply need a functioning laptop and $25 for every 20 hours of play to obtain (what is marketed as) a near identical experience to playing on a high-end PC". Here he discusses the diverse attempts to implement cloud gaming. Gaming lagged behind other media venues, though: "The most infamous of these services, OnLive, launched in 2010. As with GeForce Now, OnLive was met with a wave of positive press, with one tech journalist describing the service as ‘feeling exactly as if [he] had installed the software on [his] local computer’ (Bray, 2010). Another described OnLive as ‘the easy path to instant gratification gaming’ (Takahashi, 2010). Audiences did not agree. OnLive struggled to grow its user base despite the positive press, with user reviews citing high input latency and poor picture quality as major deterrents to regular use (Leadbetter, 2010). After laying off all of its employees, the company behind OnLive dissolved in 2012 – only two years after the launch of the service (Hollister, 2012). As other media took to the clouds, gaming stayed firmly on the figurative ground". Then he states the article's main goal: “In the following essay, I describe the physical and technical limitations that cause infrastructure failure, and use evidence drawn from the fields of critical infrastructure studies and game studies to explain why this kind of externally introduced failure is so disruptive to digital games". Infrastructural failure: “Despite what Nvidia’s marketing appears to claim, high latency and subpar image quality in streaming cannot be eradicated with more powerful GPUs. These problems are consequences of infrastructure, caused by the distance between players and data centers, the quality of players’ internet connections, and other material factors – all variables that Nvidia is unable to control”. 
Then he goes on to explain in some detail how the human-machine relationship has to function during gameplay so that players can feel they are playing something. He also describes why input lag is a challenge to cloud gaming infrastructure: "The most obvious of these potential glitches is input lag. When using a cloud service such as GeForce Now, a player interacts with the streaming game by entering inputs using a controller or keyboard. These inputs must then be sent over the internet to the remote GPU running the game, where they are translated into in-game actions. The player sees the effect of their inputs after the GPU’s video output travels back through the internet to be displayed on their PC. This entire process can only happen as fast as the network can allow, with factors like the distance between the player and the GPU or the quality of the player’s internet connection dictating the speed at which this information can be exchanged. If this process happens slowly enough, it manifests as input lag: a noticeable delay between a player’s input and the corresponding in-game action taking place on their screen. This delay can sever the already tenuous relationship between player action and perception, a relationship all gameplay is ultimately dependent on. (...) [players] must instead be able to perceive in-game events as the direct consequence of their actions. If perception can no longer be relied on by the player, then gameplay itself can no longer exist". "Another problem is video compression. As computer hardware becomes more powerful and games continue to strive for graphical fidelity, the amount of bandwidth needed to transmit a video feed from a big-budget game also grows accordingly. This is not much of a problem for people with access to high-end fibre-optic networks, but bandwidth limitations can result in people in rural or undeveloped areas being locked out of the service. 
Since cloud gaming providers do not own the infrastructure they use [he was not counting on Stadia and Amazon], and thus are incapable of upgrading internet cable networks to allow for higher bandwidth limits, they must instead use encoding algorithms to compress their video output to an appropriate size. Ideally, this compressed version of the video output is then decoded and expanded by the player’s PC. However, if the network speed drops, the player’s PC will not have enough time to properly decode the compressed video output before it is shown to the player. This causes the video quality of the game to drop accordingly. Video streaming services like Netflix and Youtube must also deal with this problem, but have the crucial advantage of predictability – video progresses in a linear fashion, and can thus be preemptively loaded onto the user’s PC". (Good old buffering.) In summary, the main challenges to cloud gaming infrastructure are input lag, video compression, and non-linear video progression (which means the stream cannot be buffered as in film streaming). Every time a Hulu video stops to load in the middle of a scene or an iTunes song refuses to play, infrastructure is failing and, through this failure, is being made visible. "Almost all big-budget “AAA” games require players to develop context-specific skills (such as aiming attacks, timing jumps, or dodging obstacles) to overcome rule-based challenges, an experience that is easily disrupted by the infrastructural problems caused by game streaming. (...) When infrastructure becomes failure, the player fails as well". Generative failure: "One of the digital games used in the marketing for Nvidia’s Geforce Now, PLAYERUNKNOWN’S BATTLEGROUNDS (PUBG), is a competition. 100 unarmed players drop into an island, where they must scavenge for equipment and weapons. The last surviving person wins. Players can be taken down with only a handful of shots, and the rush to claim limited resources regularly leads to panicked firefights. 
In these moments, players can only rely on their skill at the game: their ability to react to danger, to use the right equipment and, most importantly, to aim quickly and accurately. However, in order to rely on their skill, players must also rely on their PC’s ability to receive and process their inputs – or, in the case of a cloud streaming service, for their remote GPU’s ability to receive and process their inputs". "The amount of time between input and action needed to cause this perceptual dissonance is around 200 ms for most people (Andres et al., 2016), but dedicated members of the PC gaming community consider input lag over 100 ms unacceptable (Display Lag, n.d.)". Some good insights: "While infrastructure has been described by Parks (2015: 355) as ‘stuff you can kick’, a reference to the material nature of distribution systems, this is an example of infrastructure kicking back. Input lag and other network issues exert a material force upon people, creating a challenge that exists outside of the game-world and that cannot be overcome within the game. With cloud streaming, if the network fails, the player fails. Failure begets failure". So we can say that infrastructure is also kicking back, as analyses of the waste and carbon footprints associated with the scaling up of data centre technology show. Infrastructures are most of the time stuff you can kick, but they can also kick back due to the natural resources they require. How to compensate for this is one of the big questions posed by the industry's struggles towards sustainability. "Though 100 players enter every match, only one can ever emerge the victor. The rest will fail, learn from their mistakes, and try again. Over time, players become more skilled at the game, and more likely to win any given match. (...) This cycle of failure is the primary draw of skill-based games. 
Players submit willingly to this ‘consensual failure’ in order to improve themselves and, with enough play, to achieve a feeling of personal growth and achievement. Crucially, the ability for players to improve and succeed is contingent on failure being conquerable – the rules of the game must be consistent and comprehensible". The future of cloud gaming: Any streaming company that wants to be accepted by players has to prevent infrastructural failure from causing player failure. "So can Nvidia’s GeForce Now and the other new attempts at cloud gaming avoid failure in a market that has been historically defined by failure? Unless these companies plan on laying down fiber optic cables across the country, or on building data centres in every American city, then no." He was not counting on the fact that the infrastructural assemblages for gaming (among other things) would be provided by big tech and IT conglomerates entering the sector. The infrastructure does not need to be provided by the game industry or game hardware companies. Everyone in game development and publishing is now lagging behind or, to put it more precisely, depending on the infrastructure and models provided by such conglomerates... See the case of Amazon and Google entering the cloud gaming market. "What’s more, companies hoping to remotely stream games have to contend with the entities that actually control network infrastructure in North America: internet service providers. Broadband caps enforced by companies like Sprint and AT&T are another obstacle to fast, reliable game streaming, and would affect users regardless of their location or access to fiber-optic cabling". Very US- and Canada-centric thinking. Besides, he did not see it coming, but it was precisely what happened. AWS and Alphabet are capable of providing such infrastructure for highly demanding IT service markets. 
Developers would not have to contend with the entities controlling the infrastructure of networked computing in the cloud, as they have already been swallowed up by them. It is more a case of mutually orienting each other now, perhaps. An interesting suggestion: "Turn based games avoid issues with input lag by not running in ‘real time’, and role-playing games often move at a slow enough pace that mild latency would only have a negligible effect on game performance". But then not all competition-based games should be understood the way he puts it, I think: "By removing skill-based challenges and focusing instead on exploration, decision making, and player experiences, non-skill-based games actively challenge the violent, competition-oriented games that serve as the status-quo in the digital games industry". His concluding remarks: "If game streaming could work, then it would have to be in a very different cloud from the one we have now. A cloud that is less about centralization and control, and one that is more concerned about experience and access. One that understands the game cannot simply be removed from the player, separated over hundreds of kilometers, and made vulnerable to the invasions of the real".

Playing videogames in the countryside of Rio Grande do Sul in the late 1990s had more epistemological significance than one could initially expect. As a sub-tropical region of Brazil, Rio Grande do Sul is known for its temperate climate with very well-defined seasons - an often mildly cold Winter and a very hot and dry (and nowadays longer) Summer. In the north-western parts of the State, such as my hometown, Summer nights can be very exhausting. 
Lower-middle-class teenagers (like myself at the time) could not expect to spend too much time in air conditioning, as energy bills could take up a high percentage of a household's budget -- often people would gather in one air-conditioned room and do the same activity together for the couple of hours the machine was turned on. Playing videogames was hardly one of those activities, since it was (back then) very much regarded as a teenage pastime. Playing was therefore restricted to the late hours of the night, when the temperature was relatively milder and the A/C no longer needed to be turned on. I am relying here on a personal recollection to emphasise that the relationship between gaming and heat can already be noticed on the very small scale of personal, individual perception: often the old Playstation 1 console would just shut down and stop working for hours due to overheating. This was a condition inflicted by the environment, since the console would rarely shut down when playing with the A/C turned on. Although I am not aware of how exactly the Playstation heat prevention system functioned at the time, this technical feature has a very simple explanation in the case of computers: in order to avoid compromising or damaging its internal components, a computer's motherboard carries basic firmware called the BIOS, which makes the computer shut down if the CPU temperature surpasses a set threshold. The exact shutdown temperature varies with the BIOS settings, but it generally ranges from 70 to 100 degrees Celsius.
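
To make the threshold logic a bit more tangible, here is a minimal Python sketch of the kind of check described above. It is only an illustration under stated assumptions, not how any real BIOS or console firmware is implemented: the names `ThermalGuard` and `first_shutdown_index`, the 90 degree threshold and the sample readings are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class ThermalGuard:
    """Hypothetical stand-in for a firmware thermal shutdown setting."""

    # Assumed threshold; the text only says real values sit roughly
    # between 70 and 100 degrees Celsius depending on the settings.
    shutdown_celsius: float = 90.0

    def should_shut_down(self, cpu_celsius: float) -> bool:
        # Shut down only when the reading surpasses the threshold.
        return cpu_celsius > self.shutdown_celsius


def first_shutdown_index(
    readings: Sequence[float], guard: ThermalGuard
) -> Optional[int]:
    """Return the index of the first reading that trips the guard, or None."""
    for index, temperature in enumerate(readings):
        if guard.should_shut_down(temperature):
            return index
    return None


# Made-up CPU temperature samples for a hot Summer night without A/C.
hot_night = [55.0, 72.0, 88.0, 93.0, 60.0]
print(first_shutdown_index(hot_night, ThermalGuard(shutdown_celsius=90.0)))
```

With these made-up samples the guard trips at the fourth reading (index 3); with a cooler room and readings that stay below the threshold, the function returns `None` and the machine keeps running.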

This is just a localised, small-scale example of how gaming computers require constant cooling to perform adequately. But what can we say when game processing and storage are not performed at one's home, but in specifically designed facilities like Data Centres and Server Farms, as in the case of Cloud Gaming? As we know, Cloud Gaming allows users to store and run games on a remote graphics processing unit (GPU) housed in a Data Centre. The video output of these games is then streamed to any personal computer, with the inputs of a multitude of players sent back to the cloud server over the internet (Willett 2019). Strategies to cool down Data Centres vary, of course, as they are designed and developed in several different contexts, and companies often find solutions which are better suited to regional specificities - such as geographic location, the efficiency of local energy grids, and the size and scale of operations, just to mention a few among a myriad of aspects. But we can observe how the scale of these resource- and energy-intensive activities sometimes requires pretty eccentric approaches from system designers and geoengineers alike in order to cool down these facilities. Server halls have been built in underground geological repositories; bunkers from the Cold War era have been repurposed with the goal of maintaining a fire-isolated (and also privately secured) position; Scandinavian nation-states have been advertising cold weather as a resource for building "more sustainable" data centres in snowy regions (Vonderau, 2019), which require less electrical energy to be drawn for artificial cooling; and servers have also been installed underwater for the same reason. The more traditional "solution" besides A/C, though, is just using water from nearby sources to cool down server rooms (liquid cooling) -- which leverages the higher thermal transfer properties of water or other fluids to cool the facilities. 
This is much more energy-efficient than air conditioning, although it of course also impacts local water supplies. As Star and Ruhleder (1996, p.113) famously put it, while infrastructures are often concealed from sight due to their very endemic role of performing background functions in modern societies, they are often brought to the surface of public attention only when they fail. This can be said to be the case with the recent succession of fires in Data Centre facilities in several locations around the world. Although companies tend to be very discreet (if not plainly opaque) about the conditions and reasons that allowed fires to break out in their facilities, it is hardly questionable that the role of Data Centres as implicit infrastructures for the functioning of contemporary societies becomes highly noticeable when they are set ablaze. Not only is stored data lost, but all kinds of services are suddenly paralysed or shut down. In the case of gaming, we can observe what happened when the OVHcloud SBG2 Data Centre in Strasbourg burnt down. Twenty-five of the European servers of the sandbox survival game Rust were destroyed by the fire, and developers Facepunch Studios were not able to restore the lost data. While the physical assemblages of infrastructure burnt in Strasbourg, the secret items, collectible items and other sorts of features disappeared along with the players' monthly saved progress in the virtual worlds of the game. Chess players took it in their stride when servers of the free Lichess service were lost. "Several of the Lichess servers burnt down in a data centre fire tonight", the service tweeted. "No-one was harmed. Thanks to our zealous sysadmin and his backups, all we lost was 24h of puzzle history". The rather modest amount of data lost in the fire contrasts with what these calamitous situations highlight in terms of their elemental entanglements: on the ground, the cloud is actually pretty dense. 
It took six hours for 100 firefighters to bring the fire in the 500-square-metre data centre under control, using 44 fire fighting vehicles, including a pump ship on the Rhine (Judge 2022). Moreover, as revealed by Data Centre Dynamics (DCD), an organisation which promotes best practices in data centre engineering, most Data Centres are designed to be difficult to switch off, as accidental shut-offs would bring services down. Since power could not be cut off through a single switch, firefighters took nearly three hours just to get the facility's power cut off, which let the electrical fire spread more quickly through the location. The buildings had wooden ceilings built with materials designed to resist fire for around one hour, but since the energy could not be switched off quickly, the power room kept burning while the fire spread. The fire thereby reveals an important principle of regularly working cloud infrastructures: the need and the designing for constant availability -- first and foremost the reason why power could not be switched off quickly in the OVHcloud SBG2 episode. How such an imperative can be reconciled with the principles of environmental sustainability can only be, nonetheless, a question for heated discussion. A less noticeable issue though (perhaps because it is less advertised by the cloud services themselves) is the question of scale. The fire is scaled up by the massive number of servers performing highly intensive processing, at correspondingly high temperatures, not only in the same space but also at the same time. This growth in simultaneous energy demand is perhaps even less visible to players, but becomes shocking in scale when it surfaces (as a big fire, for instance), especially if we take into consideration that players like 12-year-old me would have a very direct experience of the thermal nature of gaming through a local shutdown, but would rarely experience such a drastic episode as a half-mile-long fire. 
We can see that, although not experienced by the immediate perception of players, the dimension of incidental environmental breakdowns unsurprisingly increases when games become large-scale media infrastructures. Together with the outsourcing of computing power and data processing, cloud gaming outsources the thermal properties of the medium. The computer becomes much less apparent to players, while it may be increasingly exchanging waste heat somewhere else in a different site, together with a large number of machines, in a large number of rooms, which may have a large-scale interaction with other non-computational systems such as the metabolism of local water supplies. In a relatively small 1 megawatt data centre (that uses enough electricity to power 1,000 houses), these traditional types of cooling would use 26 million litres of water per year (Ashtine & Mitton, 2021). This is why the transition of gaming from a locally based activity to a cloud-based service should also be analysed through a material lens considering the thermal transfers it encompasses. This is why observing the heat of gameplay modes matters. Digital media has long been described as ephemeral, immaterial, and cold – all adjectives which are further reinforced by the metaphor often used to explain outsourced, synchronous networked computing – the cloud. A more careful observer would perceive how the regulation of temperature sets conditions for how the matter of media technologies takes shape and circulates through the world (Starosielski, 2016, p.305), conditions in which “non-mediatic” or “premediatic” materialities can be transformed into elements of mediation (Parikka, 2013, p.70; 2015, p.4). Whether these elements result in the slow, cumulative increase of waste heat or the wild and radical expenditure of a bursting fire is not only a matter of contingencies, but also of design. 
Nonetheless, if current practices in infrastructure design are not making the thermal conditions of organic environments milder, but instead making only the relationship itself more opaque, as Peter Krapp (2015) notices in his article on Polar Media, "it pertains to the realm of fiction the role of exposing our senses to the paradoxes and risks". In this case we should consider the risks of ignoring media’s endemic relationship with temperature. Krapp reminds us of a passage in Thomas Pynchon’s 2013 novel, Bleeding Edge, when one character states that "more and more servers are put together in the same place putting out levels of heat that quickly become problematic unless you spend the budget on A/C”. The solution his partner suggests in the novel is “to go north, set up server farms where heat dissipation won’t be so much of a problem, take (...) power from renewables like hydro or sunlight, use surplus heat to help sustain whatever communities grow up around the data centers. Domed communities across the Arctic tundra”. To which he is answered: “My geek brothers! the tropics may be OK for cheap labor and sex tours, but the future is out there on the permafrost, a new geopolitical imperative – gain control of the supply of cold as a natural resource of incomputable worth, with global warming, even more crucial”. The imagery evoked by Pynchon’s character is of course deliberately cynical, but it may also strike a sensitive nerve. Mäntsälä, a Finnish town of 20,000 inhabitants located 65 kilometres north of Helsinki, was proclaimed decarbonized by its local district heating and energy monopoly, Nivos (Muukka, 2018). It did so with the help of a data centre launched in 2016 by Yandex, the Russian platform for internet search, transportation and geolocation services (commonly referred to as ‘the Russian Google’). 
The waste heat produced by Yandex data centres was outsourced to the house heating system in Mäntsälä, reducing the municipal usage of natural gas traded with Russia. The electricity that powers Yandex data centres is not intrinsically environmentally friendly, though, as the energy it turns into heat is sourced from other places in Europe, where it creates carbon emissions and other forms of pollution. Mäntsälä and its energy monopoly picture themselves as clean and carbon-free by means of the infrastructural outsourcing of emissions and forms of pollution to other places – akin to the displacement of energy consumption from personal home computers to centralised cloud computing facilities. Therefore, as cloud gaming emerges as thermal media, the energy demands of data centres require transparency, debate, and regulation in the context of policy development, since as large-scale infrastructural systems their implications go way beyond the local contexts where they are experienced as a service. Once again the material properties of infrastructure may be better disclosed when they fail, when the conveniences they provide to our daily experience are interrupted. It seems after all that infrastructural failure, just like the climate crisis with its heat waves, water shortages and so on, just cannot be completely outsourced.

Some notes I took while reviewing Susan Leigh Star's seminal article 'The Ethnography of Infrastructure', published in American Behavioral Scientist, Vol. 43, No. 3, November/December 1999, 377-391.

In her work describing methodological aspects of infrastructure studies or, as she prefers to name it, the ‘study of boring things’, Leigh Star highlighted the difficulty and strangeness of studying infrastructures: “struggles with infrastructure are built into the very fabric of technical work”. According to her, such studies involve more than the usual strangeness that is habitual to anthropological work. Rather it is a second-order strangeness, of an embedded sort – “that [strangeness] of the forgotten, the background, the frozen in place”. She was referring to the things we often do not consider important: “as well as the important studies of body snatching, identity tourism, and transglobal knowledge networks, let us also attend ethnographically to the plugs, settings, sizes, and other profoundly mundane aspects of cyberspace, in some of the same ways we might parse a telephone book”. We can see just how the technical media of each time participate in the perception of the mundanity of each of these artifacts, such as computer settings or telephone books. So “the ecology of the distributed high-tech spaces is profoundly impacted by the relatively understudied infrastructure that permeates all its functions. Study a city and neglect its sewers and power supplies (as many have), and you will miss essential aspects of distributional justice and planning power” (Latour & Hermant, 1998). And she remarks: “Perhaps if we stopped thinking of computers as information highways and began to think of them more modestly as symbolic sewers, this realm would open up a bit”. The article also highlights some important methodological tools: Infrastructural inversion (Bowker, 1994) -> “foregrounding the truly backstage events of work practice to help describe the history of large-scale systems”. Bowker, G. 1994. Information mythology and infrastructure. 
According to Leigh Star & Ruhleder (1996), in their work on Worm Communities, infrastructures as technical systems have the following dimensions: a) Embeddedness; b) Transparency; c) Reach or scope; d) Learned as part of membership; e) Links with conventions of practice; f) Embodiment of standards; g) Built on an installed base; h) Becomes visible upon breakdown; i) Is fixed in modular increments, not all at once or globally (p.381-382). See more in: Leigh Star & Ruhleder (1996). Steps toward an ecology of infrastructure. Some particular notes on these dimensions: d) The taken-for-grantedness of artefacts and organisational arrangements is a sine qua non of membership in a community of practice (p. 381). e) Infrastructure both shapes and is shaped by the conventions of a community of practice (e.g., the ways that cycles of day-night work are affected by and affect electrical power rates and needs). Generations of typists have learned the QWERTY keyboard; its limitations are inherited by the computer keyboards and thence by the design of today’s computer furniture (Becker 1982) (p.381). g) Infrastructure does not grow out of nothing. It wrestles with the inertia of the installed base and inherits strengths and limitations from that base. Optical fibers run along old railroad lines; new systems are designed for backward compatibility, and failing to account for these constraints may be fatal or distorting to new development processes (Hanseth & Monteiro, 1996) (p.382). h) The normally invisible quality of working infrastructure becomes visible when it breaks; the server is down, the bridge washes out, there is a power blackout (p.382). Infrastructure and Methods: The article also highlights that the methodological implications of a relational approach to infrastructure are considerable: Fieldwork (...) 
transmogrifies to a combination of historical and literary analysis, traditional tools like interviews and observations, systems analysis, and usability studies (p.382). People make meanings based on their circumstances, and (...) these meanings would be inscribed into their judgements about the built information environment (p.383). The labor-intensive and analysis-intensive craft of qualitative research, combined with a historical emphasis on single investigator studies, has never lent itself to ethnography of thousands. (...) The scale question remains a pressing and open one for methodological concerns in the study of infrastructure. (...) Yet, I know of no one who has analysed transaction logs to their own satisfaction, never mind to a standard of ethnographic veridicality (p.383-384). Tricks of the Trade: In this section, Leigh Star examines tricks she developed in her studies which can be helpful for analysing infrastructure and unraveling some of its features.

The thorny problem of indicators: In Leigh Star’s view, one can read information infrastructure as: a) a material artifact constructed by people (physical and pragmatic properties); b) a trace or record of activities (transaction logs, email records, classification systems as evidence of cultural decisions, conflicts and values); c) a veridical representation of the world (here the information system is tacitly taken as if it were a complete enough record of actions). These are three different orders of information that researchers can access. It is easy to elide these functions of indicators, so it is VERY important to cultivate an awareness of these differences and to disentangle them. For example: “Films about rape may say a great deal about a given culture’s acceptance of sexual violence, but they are not the same thing as police statistics about rape, nor the same as phenomenological investigations of the experience of being raped” (p.388). One should also not confuse precision with validity when creating a system of indicators and categories: they are two different things. Bridges and barriers: “At least since Winner’s (1986) classic chapter, ‘Do Artifacts Have Politics?’ the question of whether and how values are inscribed in technical systems has been a live one in the communities studying technology and its design” (p.388). In this study, Winner observed how a behind-the-scenes policy decision was made to build the automobile bridges over the parkways in New York low in height. As a result, public transport such as buses could not pass, only small private vehicles. The result: poor people were barred from the richer suburbs of Long Island, not by policy, but by design. This is just one example. Matters of accessibility, for instance, depend on such design decisions as well. The same goes for computers and IT infrastructure in poor countries or regions: the infrastructure may be funded, but the electricity may still be expensive. 
The same now happens in relation to sustainable systems for media infrastructure and global injustices. Building the infrastructure without fixing the energy systems will only widen economic inequalities. She concludes, still in the 90s, that “applying the insights, methods, and perspectives of ethnography to this class of issues is a terrifying and delightful challenge for what some would call the information age”. |

Author

This blog is meant to provide a space for discussing the geophysical as well as the imaginary entanglements between media infrastructures and organic environments. In the coming months, it will be dedicated to my current project, Cloud Gaming Atlas, which is particularly interested in observing and interrogating the infrastructures developed for cloud gaming initiatives with regard to their environmental implications. Additionally, it should also gather information about events and publications related to my project at the Zukunftskolleg and the Department of Literature, Art and Media of the University of Konstanz.
